VLM Benchmarks

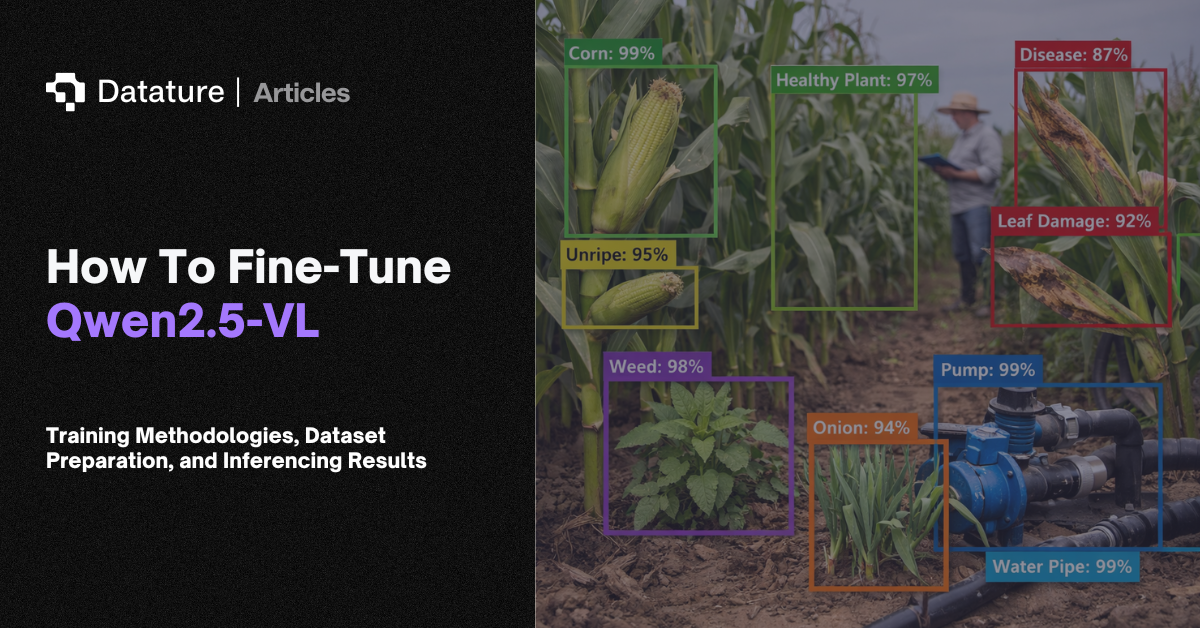

VLM benchmarks are standardized tests that measure how well vision-language models perform across different capabilities. Just as ImageNet is the reference benchmark for image classification and COCO for object detection, VLM benchmarks test whether a model can answer questions about images, reason about visual scenes, read text in documents, and avoid hallucinating objects that are not there. Benchmarks matter because VLMs are used for so many different tasks that a single accuracy number says little about fitness for any one of them; you need scores across multiple dimensions.

Key benchmarks include MMMU (Massive Multi-discipline Multimodal Understanding), a set of college-level questions across 30 subjects requiring domain expertise; MMBench, a bilingual benchmark testing perception, reasoning, and knowledge; VQAv2, open-ended visual question answering on natural images; TextVQA, questions that require reading text in images; DocVQA, document understanding; POPE, a probe for object hallucination; MM-Vet, an evaluation of integrated capabilities such as OCR combined with spatial reasoning and knowledge; and SEED-Bench, a measure of generative comprehension across 12 evaluation dimensions. Scores vary widely by model size: a 2B parameter model might score around 40% on MMMU while a 72B model might score around 70%.
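
To make the hallucination probe more concrete, here is a minimal sketch of how POPE-style results can be scored. POPE asks yes/no questions about whether an object is present in an image, so scoring reduces to binary classification metrics. The function name `pope_style_metrics` and the sample answers are illustrative assumptions, not part of any official evaluation toolkit.

```python
def pope_style_metrics(predictions, labels):
    """Score yes/no object-presence answers (hypothetical helper).

    predictions: model answers, each "yes" or "no"
    labels: ground-truth answers, each "yes" or "no"
    Any prediction other than "yes" is counted as "no" (an assumption).
    """
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("at least one example is required")

    pairs = [(p.strip().lower(), l.strip().lower())
             for p, l in zip(predictions, labels)]

    tp = sum(1 for p, l in pairs if p == "yes" and l == "yes")
    fp = sum(1 for p, l in pairs if p == "yes" and l == "no")  # hallucinated object
    tn = sum(1 for p, l in pairs if p != "yes" and l == "no")
    fn = sum(1 for p, l in pairs if p != "yes" and l == "yes")

    n = len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)

    return {
        "accuracy": (tp + tn) / n,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        # A yes-ratio far above the true share of "yes" labels suggests
        # the model tends to affirm objects that are not there.
        "yes_ratio": (tp + fp) / n,
    }


# Made-up answers for illustration only.
sample_predictions = ["yes", "yes", "no", "yes", "no", "no"]
sample_labels = ["yes", "no", "no", "yes", "yes", "no"]
sample_metrics = pope_style_metrics(sample_predictions, sample_labels)
```

Precision is the number to watch for hallucination: every false positive is an object the model claimed to see that was not in the image.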

When selecting a VLM for a production use case, benchmark scores help narrow the candidate list. A team building a document processing pipeline should weight DocVQA and TextVQA scores most heavily. A quality inspection team should prioritize POPE (hallucination resistance) and spatial reasoning benchmarks. However, strong performance on general datasets does not guarantee strong performance on domain-specific data. Always evaluate on your own test set before deploying.
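
One way to turn use-case priorities into a shortlist is a weighted average of the benchmarks that matter for the task. The sketch below assumes all scores are reported on a common 0 to 100 scale; the model names, scores, and weights are hypothetical placeholders, not published results.

```python
def rank_candidates(scores, weights):
    """Rank models by a weighted average of selected benchmark scores.

    scores: {model_name: {benchmark_name: score on a 0-100 scale}}
    weights: {benchmark_name: relative importance for this use case}
    Returns a list of (model_name, weighted_score), best first.
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")

    ranked = []
    for model, bench_scores in scores.items():
        missing = [b for b in weights if b not in bench_scores]
        if missing:
            raise KeyError(f"{model} has no score for: {', '.join(missing)}")
        weighted = sum(bench_scores[b] * w for b, w in weights.items())
        ranked.append((model, weighted / total_weight))

    ranked.sort(key=lambda item: item[1], reverse=True)
    return ranked


# Hypothetical scores for two unnamed candidate models.
candidate_scores = {
    "model_a": {"DocVQA": 90.0, "TextVQA": 80.0, "POPE": 85.0},
    "model_b": {"DocVQA": 85.0, "TextVQA": 70.0, "POPE": 88.0},
}

# Hypothetical weights for a document processing pipeline.
document_weights = {"DocVQA": 0.5, "TextVQA": 0.3, "POPE": 0.2}

shortlist = rank_candidates(candidate_scores, document_weights)
```

The ranking only narrows the field. The models at the top of the shortlist still need to be scored on a held-out sample of your own data, since the weights encode a guess about which public benchmarks resemble your workload.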
