Multimodal Learning

Multimodal learning trains models to understand and reason across multiple data types at once: images, text, audio, video, depth maps, or sensor readings. Rather than processing each type in isolation, multimodal models learn how different modalities relate to each other. For example, a model can learn that the word "dog" in a caption corresponds to a specific region in an image, or that a spoken instruction maps to a visual scene.

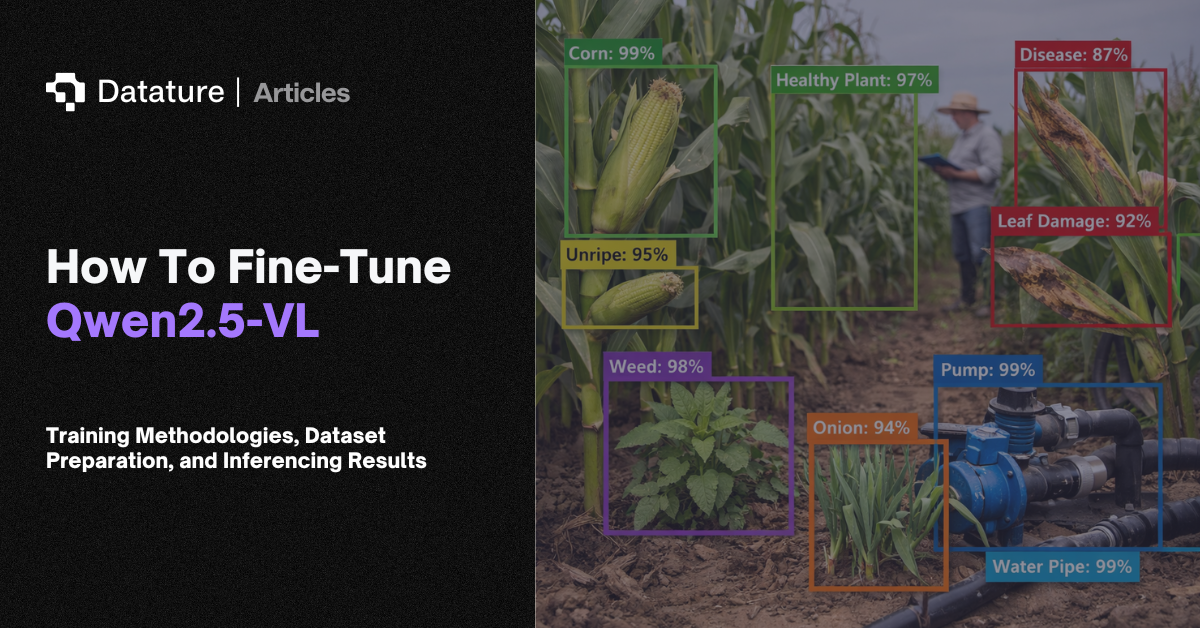

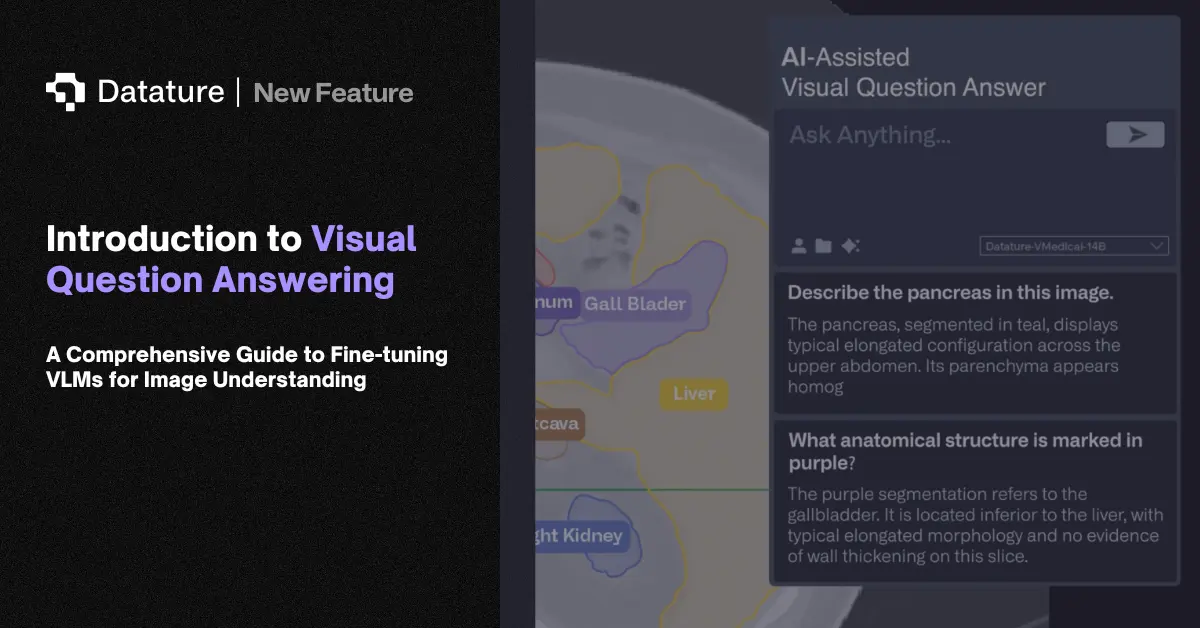

Early approaches like CLIP (Contrastive Language-Image Pre-training) and ALIGN learned shared embedding spaces from web-scale collections of image-text pairs: roughly 400 million pairs for CLIP and over a billion noisy pairs for ALIGN. During training, the model pulls matching image-text pairs together in the embedding space and pushes non-matching pairs apart. More recent vision-language models (VLMs) like GPT-4V, Gemini, LLaVA, and Qwen2.5-VL go beyond embeddings and generate text responses about images. Open models such as LLaVA and Qwen2.5-VL do this by feeding visual tokens alongside text tokens into a transformer; the architectures of proprietary models are not fully documented. This design enables visual question answering, image captioning, document understanding, and chain-of-thought reasoning over visual inputs.
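
The contrastive objective described above can be sketched in a few lines. This is a minimal illustration in plain Python, not the CLIP or ALIGN implementation: it assumes the embeddings already exist as lists of floats, uses a fixed temperature of 0.07 (real systems typically learn this value), and the function names `l2_normalize`, `log_softmax`, and `contrastive_loss` are hypothetical.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def log_softmax(row):
    """Numerically stable log-softmax over a list of scores."""
    m = max(row)
    log_z = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - log_z for x in row]

def contrastive_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric contrastive (InfoNCE-style) loss.

    image_embs[i] and text_embs[i] are assumed to be a matching pair;
    every other combination in the batch is treated as a non-match.
    """
    imgs = [l2_normalize(v) for v in image_embs]
    txts = [l2_normalize(v) for v in text_embs]
    n = len(imgs)

    # logits[i][j]: cosine similarity of image i and text j, scaled by temperature.
    logits = [
        [sum(a * b for a, b in zip(imgs[i], txts[j])) / temperature for j in range(n)]
        for i in range(n)
    ]

    # Image-to-text direction: each image should select its own caption.
    i2t = -sum(log_softmax(logits[i])[i] for i in range(n)) / n

    # Text-to-image direction: each caption should select its own image.
    columns = [[logits[i][j] for i in range(n)] for j in range(n)]
    t2i = -sum(log_softmax(columns[j])[j] for j in range(n)) / n

    return (i2t + t2i) / 2

# Toy batch: two images and two captions with 2-dimensional embeddings.
images = [[1.0, 0.0], [0.0, 1.0]]
captions = [[0.9, 0.1], [0.1, 0.9]]

aligned = contrastive_loss(images, captions)            # correct pairing
shuffled = contrastive_loss(images, captions[::-1])     # captions swapped
print(aligned < shuffled)
```

The loss is low when each image is most similar to its own caption and high when the pairing is scrambled, which is the signal that pulls matching pairs together and pushes the rest apart.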

Training approaches include contrastive learning (matching pairs in embedding space), cross-attention fusion (letting text tokens attend to image features), and model fusion (combining separately pre-trained encoders through lightweight adapters). Multimodal learning matters because real-world perception is inherently multi-sensory: autonomous vehicles combine camera, LiDAR, and radar data; medical AI combines imaging with clinical notes.
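
To make cross-attention fusion more concrete, here is a minimal sketch of text tokens attending to image features. It is a simplification under stated assumptions: a single attention head, no learned query/key/value projections, and text and image vectors that already share the same dimension. The names `softmax` and `cross_attention` and the toy vectors are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(text_tokens, image_feats):
    """Scaled dot-product cross-attention.

    Each text token acts as a query; image features act as both keys and
    values. Returns one fused vector per text token plus the attention
    weights, so the weights can be inspected.
    """
    d = len(text_tokens[0])
    fused, all_weights = [], []
    for query in text_tokens:
        # Similarity between this text token and every image feature.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in image_feats
        ]
        weights = softmax(scores)
        # Weighted average of image features: the visual context for this token.
        context = [
            sum(w * feat[c] for w, feat in zip(weights, image_feats))
            for c in range(d)
        ]
        fused.append(context)
        all_weights.append(weights)
    return fused, all_weights

# Two text tokens and three image patch features, all 2-dimensional.
text_tokens = [[4.0, 0.0], [0.0, 4.0]]
image_feats = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]

fused, weights = cross_attention(text_tokens, image_feats)
for token_weights in weights:
    print([round(w, 3) for w in token_weights])
```

Each text token ends up with a context vector dominated by the image patches it is most similar to. In a full model, learned projections would produce the queries, keys, and values, and the result would usually be added back to the text representation.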