LoRA (Low-Rank Adaptation)

LoRA (Low-Rank Adaptation) is a technique for fine-tuning large neural networks without updating all their parameters. Instead of modifying the full weight matrices (which together can hold billions of parameters), LoRA freezes the pre-trained weights and injects small, low-rank matrices alongside them. During fine-tuning, only these small matrices are trained, so gradients and optimizer states are kept only for them. This cuts GPU memory usage so much that you can fine-tune a 7B-parameter VLM on a single consumer GPU that could never hold the gradients and optimizer states of the full model.

LoRA works by decomposing the weight update into two small matrices. If the original weight matrix W is d x d, LoRA adds matrices B (d x r) and A (r x d), where r (the rank, typically 4-64) is much smaller than d. The effective update is the product BA, a d x d matrix of rank at most r, scaled by alpha / r. During training this product runs as a parallel branch next to the frozen weight; for deployment it can be merged into the original weight, which means zero additional inference latency after merging. QLoRA extends the idea by quantizing the frozen base model to 4-bit precision and training LoRA adapters on top of it, reducing memory even further. Common hyperparameters include the rank (r), alpha (the scaling factor), and which layers to target (attention projections, MLP layers, or all linear layers).
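The decomposition and the merge step can be sketched in a few lines of plain Python. This is a toy illustration, not a training implementation: the sizes (`d = 8`, `r = 2`, `alpha = 4`), the helper functions, and the random values standing in for trained weights are all assumptions made for the example. It follows the common convention of initializing B to zero and scaling the update by `alpha / r`.

```python
import random

def matmul(X, Y):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]

def add(X, Y, scale=1.0):
    """Return X + scale * Y, elementwise."""
    return [[x + scale * y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]

def rand_matrix(rows, cols, rng, std=0.02):
    """Small Gaussian-initialized matrix (illustrative values only)."""
    return [[rng.gauss(0.0, std) for _ in range(cols)] for _ in range(rows)]

# Toy sizes (assumed); real layers have d in the thousands.
d, r, alpha = 8, 2, 4
scale = alpha / r
rng = random.Random(0)

W = rand_matrix(d, d, rng)           # frozen pre-trained weight, d x d
A = rand_matrix(r, d, rng)           # trainable, r x d, random init
B = [[0.0] * r for _ in range(d)]    # trainable, d x r, zero init

# With B = 0 the update BA is zero, so the adapted layer starts out
# identical to the pre-trained one.
starts_unchanged = add(W, matmul(B, A), scale) == W

# Random values standing in for what training would put into B.
B = rand_matrix(d, r, rng)

x = rand_matrix(d, 1, rng)           # one input, as a d x 1 column

# Training-time forward pass: frozen branch plus low-rank branch.
y_adapter = add(matmul(W, x), matmul(B, matmul(A, x)), scale)

# Deployment: fold the update into the weight once, then use one matmul.
W_merged = add(W, matmul(B, A), scale)
y_merged = matmul(W_merged, x)

max_diff = max(abs(a[0] - b[0]) for a, b in zip(y_adapter, y_merged))

full_params = d * d                  # parameters in W
lora_params = 2 * d * r              # parameters in A and B combined
```

Both paths give the same output up to floating-point rounding, which is why merging adds no inference latency. Even at this toy size the adapter holds half as many parameters as W (32 versus 64); the ratio `2r / d` shrinks quickly as d grows.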

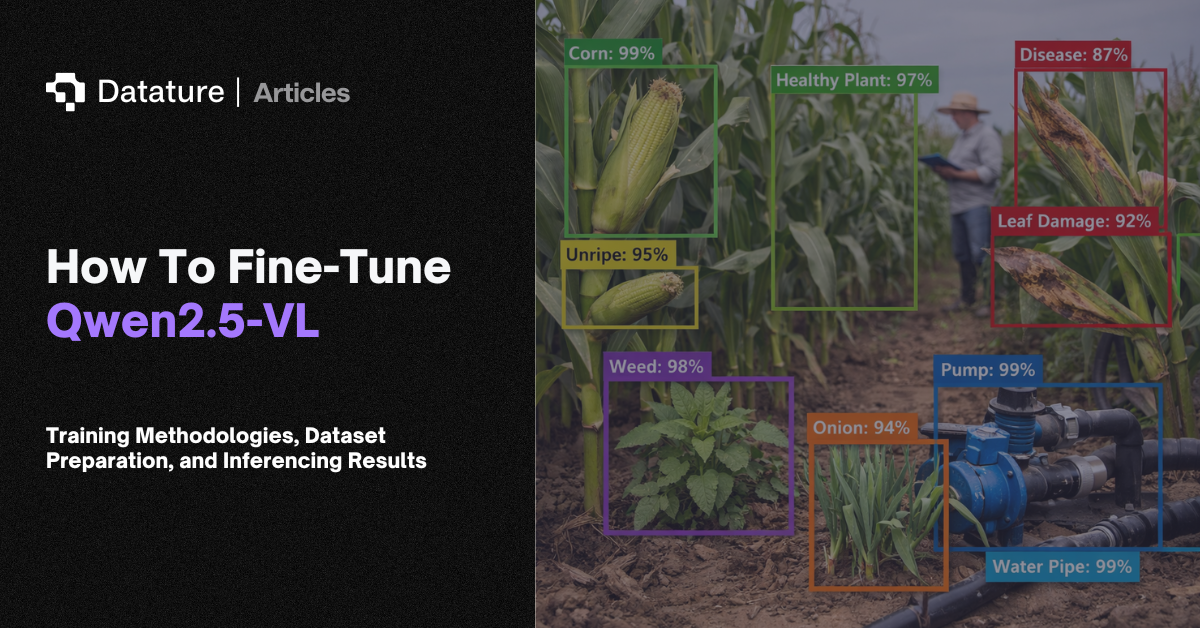

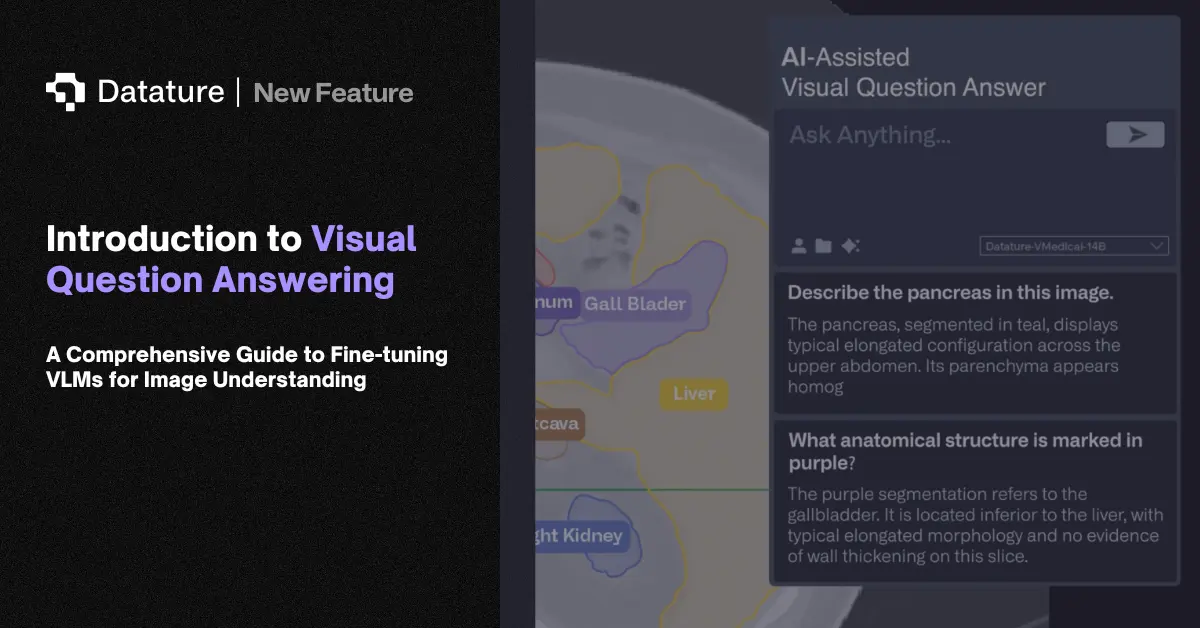

LoRA is a standard method for fine-tuning VLMs like PaliGemma, Qwen-VL, and LLaVA on domain-specific data. A manufacturing company can adapt a VLM to recognize its specific defect types with a few hundred annotated examples and a single A100 GPU. Medical teams tune general-purpose VLMs to their imaging modalities the same way. LoRA adapters are small files (typically tens of megabytes) that can be swapped at inference time, making it practical to maintain multiple domain-specific variants of a single base model.
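A rough size estimate shows where the "tens of megabytes" figure comes from. The sketch below assumes a hypothetical model shape (hidden size 4096, 32 transformer layers), square d x d projections, two targeted projections per layer, rank 16, and 16-bit storage. None of these numbers come from a specific model card, and the function name is made up for the example.

```python
def lora_adapter_size(hidden, layers, targets_per_layer, rank, bytes_per_param=2):
    """Estimate parameter count and file size of a LoRA adapter.

    Assumes every targeted projection is hidden x hidden, so each one
    gets B (hidden x rank) and A (rank x hidden).
    """
    params_per_module = 2 * hidden * rank
    total_params = layers * targets_per_layer * params_per_module
    return total_params, total_params * bytes_per_param

# Hypothetical 7B-class shape: 32 layers, hidden size 4096,
# two attention projections targeted per layer, rank 16, fp16 storage.
params, size_bytes = lora_adapter_size(hidden=4096, layers=32,
                                       targets_per_layer=2, rank=16)
size_mib = size_bytes / (1024 ** 2)

print(f"{params:,} adapter parameters, about {size_mib:.0f} MiB")
```

Under these assumptions the adapter has about 8.4 million parameters and takes 16 MiB on disk, against roughly 14 GB for 7B base weights in 16-bit precision. Targeting more layers or raising the rank scales the adapter size linearly.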