Image Embeddings

Image embeddings are fixed-length numerical vectors that represent the visual content of an image in a compact form. A pre-trained neural network processes the image and outputs a vector (typically 256 to 2048 dimensions) that captures its semantic meaning. Images that look similar or contain similar objects end up with vectors that are close together in this embedding space, while visually different images are far apart.

The backbone network determines what the embeddings capture. ResNet or EfficientNet embeddings (trained on ImageNet classification) encode object categories and scene types. CLIP embeddings (trained on image-text pairs) encode both visual and semantic concepts, enabling text-based image search. DINOv2 embeddings (trained with self-supervision) capture fine-grained visual structure useful for segmentation and retrieval. The embedding is usually taken from the layer just before the classification head (the "penultimate layer" or a global average pooling output).

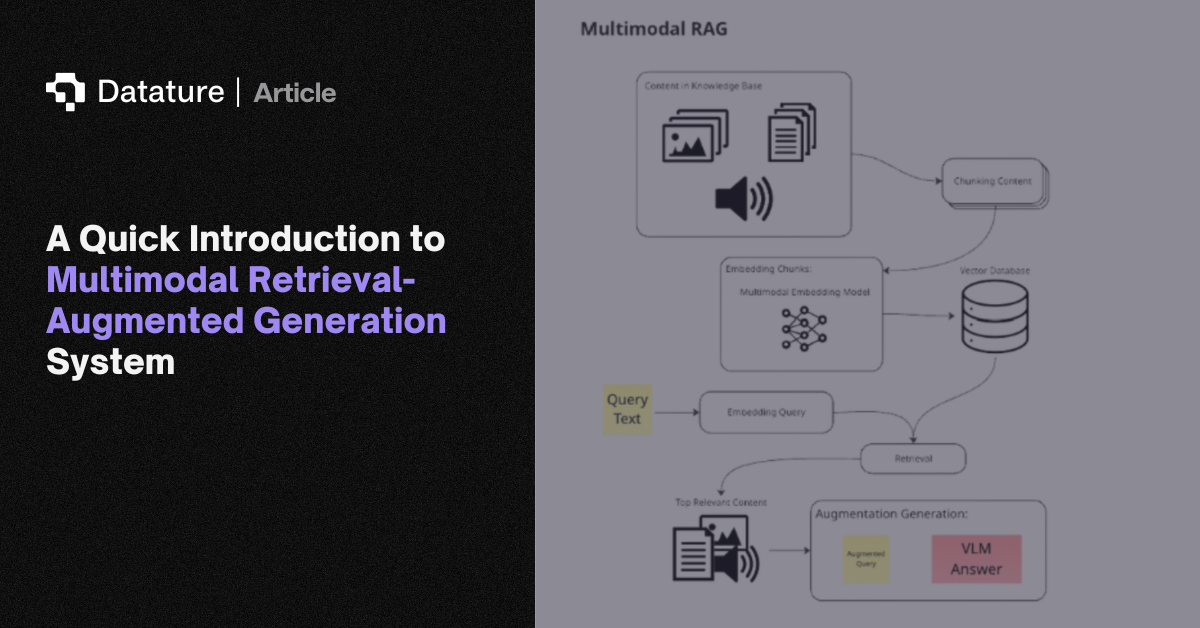

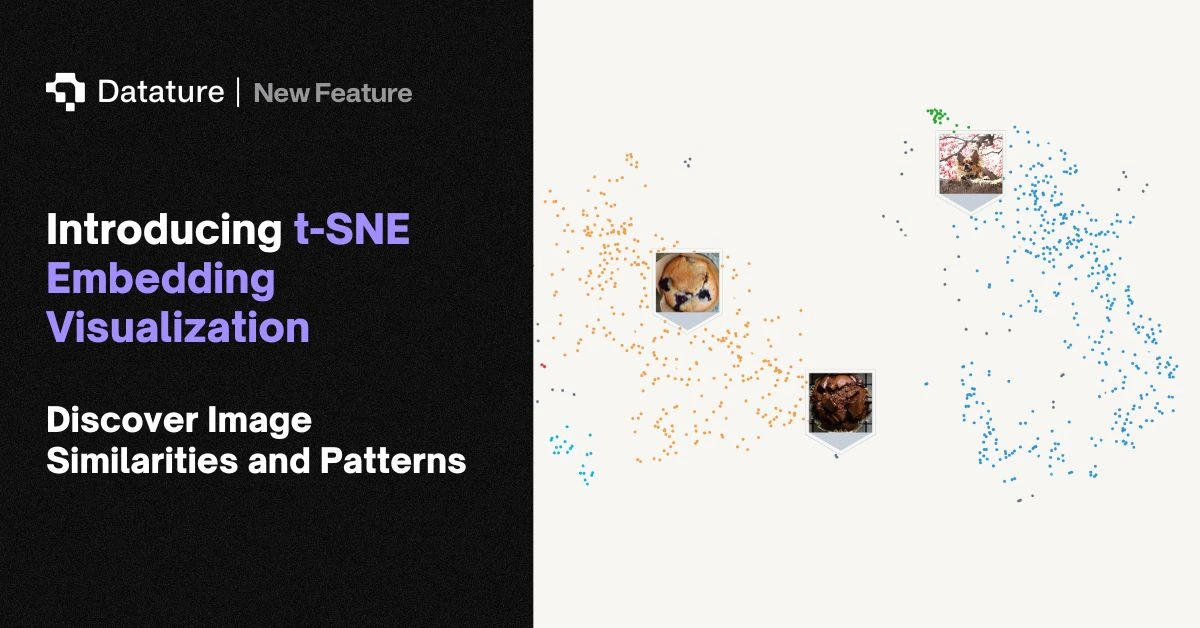

Practical uses include image similarity search (find visually similar products in a catalog), duplicate detection (identify near-duplicates in a dataset), clustering (group unlabeled images by visual content), retrieval-augmented generation (find relevant reference images for a VLM), and t-SNE/UMAP visualization (plot a dataset's visual distribution in 2D to spot class overlap or outliers). Datature supports embedding visualization for dataset analysis.