TFLite / LiteRT

TFLite (TensorFlow Lite), which Google renamed LiteRT in 2024, is Google's runtime for running machine learning models on mobile phones, microcontrollers, and other edge devices. A converter takes a trained TensorFlow or JAX model and serializes it into a compact FlatBuffers file (`.tflite`) optimized for low-latency inference on hardware with limited memory and compute power.
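
As a rough illustration of the conversion step, the sketch below uses the TensorFlow Python converter (`tf.lite.TFLiteConverter`) to turn a SavedModel directory into a `.tflite` file. The function name `convert_saved_model` and any paths passed to it are hypothetical, and it assumes TensorFlow is installed and the model was exported as a SavedModel.

```python
from pathlib import Path


def convert_saved_model(saved_model_dir: str, output_path: str) -> int:
    """Convert a TensorFlow SavedModel to a .tflite file; return its size in bytes.

    Hypothetical helper for illustration, not part of any library.
    """
    # Imported lazily so this module can be loaded on machines without TensorFlow.
    import tensorflow as tf

    converter = tf.lite.TFLiteConverter.from_saved_model(saved_model_dir)
    tflite_model = converter.convert()  # the serialized model, as bytes

    # Path.write_bytes returns the number of bytes written.
    return Path(output_path).write_bytes(tflite_model)
```

A call such as `convert_saved_model("exported/my_model", "my_model.tflite")` (both paths made up here) would write the converted model next to the script. The resulting file is what gets bundled with a mobile or embedded application.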

The converter supports several optimization techniques. Post-training quantization converts model weights from 32-bit floating point to 8-bit integers, which cuts model size by roughly 4x and speeds up inference on hardware where integer arithmetic is cheaper than floating point, such as CPUs without a dedicated floating-point unit. Quantization-aware training goes further by simulating quantization during training, so the model learns to compensate for rounding error and retains more accuracy. TFLite also supports hardware delegates, which offload computation to specialized accelerators such as the GPU, the Hexagon DSP on Qualcomm chips, or Google's Edge TPU.
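
To make the 4x figure concrete, here is a minimal pure-Python sketch of 8-bit affine quantization, where each real value is approximated as `scale * (q - zero_point)`. It is a simplified per-tensor illustration, not the converter's actual implementation (which, for example, typically quantizes weights symmetrically and per channel). The helper names and the sample weights are made up for the example.

```python
from array import array


def quantize_affine(values, num_bits=8):
    """Map floats to signed integers so that real ~= scale * (q - zero_point)."""
    qmin, qmax = -(2 ** (num_bits - 1)), 2 ** (num_bits - 1) - 1
    # The represented range must include 0.0 so that zero is stored exactly.
    lo, hi = min(min(values), 0.0), max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) or 1.0  # guard against all-zero input
    zero_point = round(qmin - lo / scale)
    quantized = [
        max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values
    ]
    return quantized, scale, zero_point


def dequantize_affine(quantized, scale, zero_point):
    """Recover approximate float values from the quantized integers."""
    return [scale * (q - zero_point) for q in quantized]


weights = [-1.2, -0.4, 0.0, 0.3, 0.9, 2.1]  # made-up example weights
q, scale, zp = quantize_affine(weights)
restored = dequantize_affine(q, scale, zp)

float_bytes = len(array("f", weights).tobytes())  # 4 bytes per float32 value
int8_bytes = len(array("b", q).tobytes())         # 1 byte per int8 value
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"size ratio: {float_bytes // int8_bytes}x")
print(f"max round-trip error: {max_error:.5f} (scale = {scale:.5f})")
```

Storage drops from four bytes per weight to one, and the round-trip error stays within about half a quantization step. In the TensorFlow converter API, post-training quantization is requested by setting `optimizations = [tf.lite.Optimize.DEFAULT]` on a `tf.lite.TFLiteConverter` before calling `convert()`.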

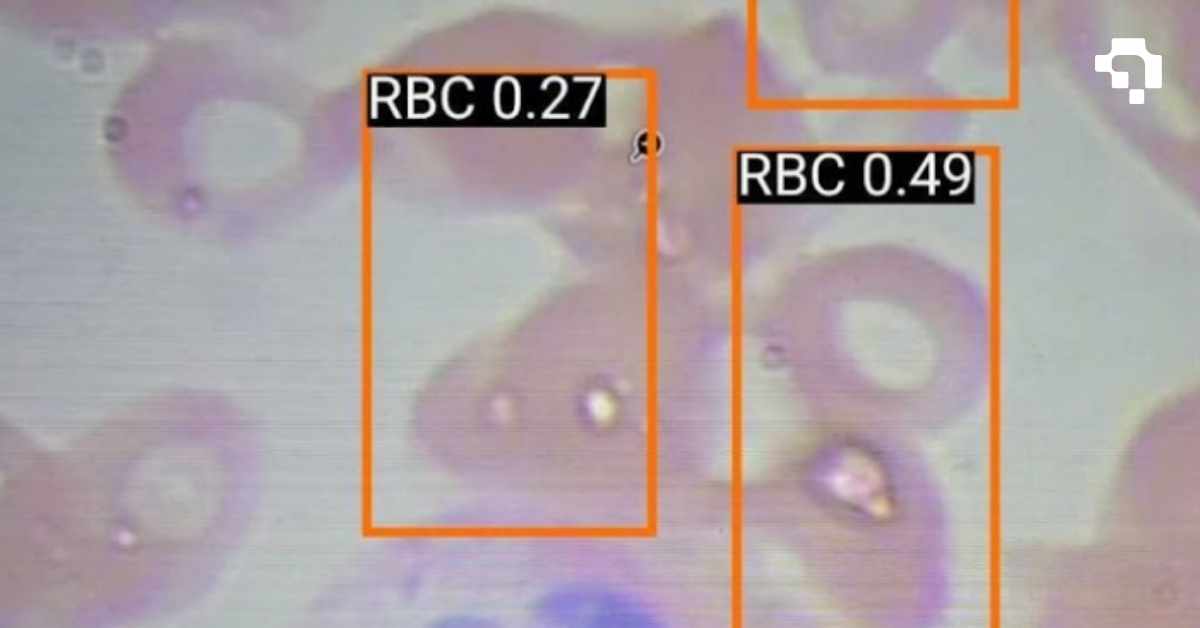

For computer vision, TFLite is widely used in mobile applications such as on-device object detection, barcode scanning, face mesh estimation, and real-time pose tracking. Models from the TFLite Model Zoo and MediaPipe provide ready-to-deploy solutions for common tasks. The main limitation is that TFLite's built-in operator set covers only a subset of TensorFlow operations. Complex custom architectures may therefore need unsupported layers rewritten in terms of supported operators, implemented as custom operators, or run through TensorFlow kernels via Select TF ops. When a hardware delegate is in use, operations the delegate cannot handle fall back to CPU execution.
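
One way to work around an unsupported operation at conversion time is to allow the converter to fall back to TensorFlow kernels ("Select TF ops"). The sketch below shows that setting, assuming TensorFlow is installed and the model is a SavedModel. The function name `convert_with_select_tf_ops` is hypothetical.

```python
def convert_with_select_tf_ops(saved_model_dir: str) -> bytes:
    """Convert a SavedModel, allowing TensorFlow kernels for ops TFLite lacks.

    Hypothetical helper for illustration, not part of any library.
    """
    # Imported lazily so this module can be loaded on machines without TensorFlow.
    import tensorflow as tf

    converter = tf.lite.TFLiteConverter.from_saved_model(saved_model_dir)
    converter.target_spec.supported_ops = [
        tf.lite.OpsSet.TFLITE_BUILTINS,  # use native TFLite kernels where possible
        tf.lite.OpsSet.SELECT_TF_OPS,    # otherwise fall back to TensorFlow kernels
    ]
    return converter.convert()
```

The trade-off is that a model converted this way needs a runtime that includes the TensorFlow kernels, which increases binary size, so rewriting the model to use built-in operators is usually preferable when it is feasible.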
