Knowledge Distillation

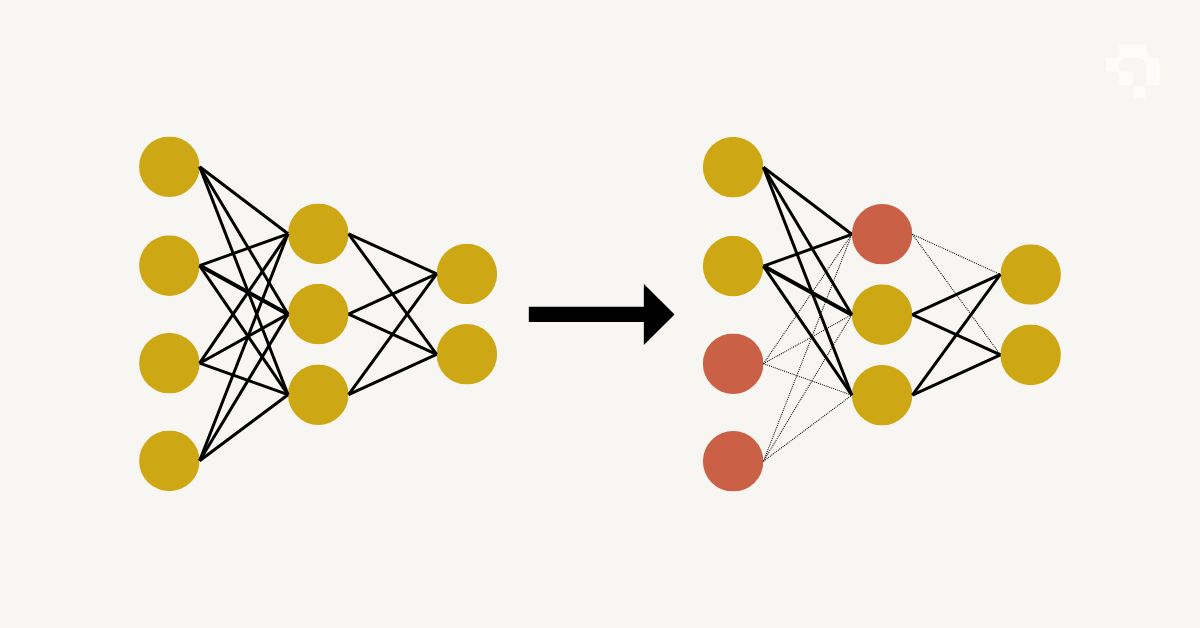

Knowledge distillation transfers the learned behavior of a large, accurate "teacher" model into a smaller, faster "student" model. The student is trained not just on the ground truth labels, but also on the teacher's output probabilities ("soft labels"). These soft labels carry richer information than hard labels: when a teacher says an image is 70% cat, 20% dog, and 10% fox, it encodes visual similarity relationships that a hard "cat" label doesn't capture.

The original method (Hinton et al., 2015) uses a temperature parameter to soften the teacher's output distribution, making the relative probabilities between classes more visible. The student's loss combines a standard cross-entropy term (against ground truth) with a KL divergence term (against the teacher's softened outputs). Feature-based distillation goes deeper: instead of just matching output probabilities, the student learns to reproduce the teacher's intermediate feature representations at specific layers. Relation-based distillation preserves the similarity structure between samples rather than individual outputs.

Knowledge distillation is standard practice for deploying vision models on edge hardware. A large ResNet-152 or ViT-Large teacher trains on a server, then distills its knowledge into a MobileNet or EfficientNet-Lite student that runs on phones or embedded devices. YOLO models use distillation during training to boost small-variant accuracy. The student typically recovers 95-99% of the teacher's accuracy at 5-20x faster inference speed.